Meta (formerly Facebook) has proudly announced its latest achievement: a lip-reading speech-recognition AI that has already cut the word error rate (WER) by 75%, the best result in this field so far.

Effective communication involves speech, gestures, tone, and more – essentially, verbal and non-verbal elements. Until now, even the most advanced AI could only recognize verbal cues, whereas humans also rely on visual ones, like lip movement, facial expressions, and hand gestures, as key elements in language learning. But thanks to Meta's Audio-Visual Hidden Unit BERT (AV-HuBERT) framework, which learns to understand language by both listening to and watching people communicate, this is about to change.

The latest Meta advancements

Kristen Morea, a Meta spokeswoman, reported that the company has made $50 million worth of investments in external programs so far to ensure that the Metaverse is built safely. She also pointed out that Meta has introduced four responsible innovation principles for development "with ethics, privacy, safety, and security at the forefront." Coming from a company that in the past has shown very little regard for the privacy and ethical concerns of its users, however, we can't say we are convinced. But let's see what Meta has been working on lately.

Lip-reading AI

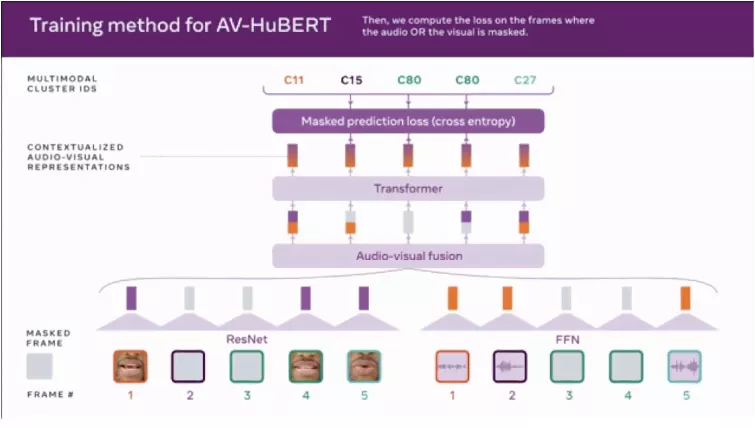

To develop its lip-reading AI, Meta uses AV-HuBERT, a multimodal learning system that combines audio and lip-movement cues to perceive language. Meta revealed that AV-HuBERT already captures "nuanced associations" between visual and auditory data, thanks to its ability to recognize the visual cues of speech (like lip and teeth movement) and pair them with incoming auditory information.

On top of that, AV-HuBERT works unsupervised or, more precisely, is self-supervised. It has mechanisms that enable it to teach itself to classify unlabeled data – through data processing and learning from the inherent data structure.

This is a tremendous advancement compared to previous lip-reading models, such as those developed by the University of Oxford and Alphabet, which were limited in vocabulary range and unable to process the audio of speakers in videos. At best, these were repeatedly trained on labeled example data to draw the connection between the examples and related outputs. So, they would eventually write the output for "dog" when shown a picture of a Labrador (the example).

3D virtual offices

In the meantime, McDannald, CEO of Environments, a so-called immersive work experience in virtual reality, is testing the company's product: software that creates VR replicas of office interiors. At the moment, she has five employees working in a virtual office, using Meta's Oculus headset. Each of these employees has a personal avatar (that resembles them to some extent), and she can check in at any moment by walking to their virtual office desks. The avatars also display different icons above their heads depending on the occasion, for example to mark a work anniversary, which is awfully reminiscent of The Sims.

You can't get locked in the Metaverse. You can get out of the Metaverse any time you like.

Not only can all this take a very creepy turn, depending on how VR products are applied and who is in charge of building them, but it also carries serious risks of privacy intrusion. VR headsets can collect more data about us than any traditional screening method that has existed so far. This grants employers and companies access to private data that they can use for profiling and advertising, with an increased risk of behavior (and even mind) monitoring.

The shortcomings and hidden dangers of Meta products

AV-HuBERT outperformed all former audiovisual speech recognition systems, reaching a word error rate (WER) of only 26.9% even though, so far, it has worked with only one-tenth of the amount of data its predecessors used. Besides, it is 50% better than all existing audio-only models at deciphering the content of speech amid loud background noise.
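For readers unfamiliar with the metric, WER is conventionally the number of word substitutions, deletions, and insertions needed to turn the system's transcript into the reference transcript, divided by the number of words in the reference. The sketch below illustrates that standard definition in Python. The function name `word_error_rate` and the sample sentences are made up for illustration, and this is not Meta's evaluation code.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the reference length.

    Illustrative helper (hypothetical name), following the usual
    WER definition: (substitutions + deletions + insertions) / N.
    """
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words
    # processed so far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr[j] = min(
                prev[j] + 1,         # deletion (reference word missing)
                curr[j - 1] + 1,     # insertion (extra hypothesis word)
                prev[j - 1] + cost,  # match or substitution
            )
        prev = curr
    return prev[-1] / len(ref)


if __name__ == "__main__":
    # One substitution ("sat" -> "sit") and one deletion ("the")
    # against a six-word reference.
    print(word_error_rate("the cat sat on the mat", "the cat sit on mat"))
```

Read this way, a WER of 26.9% corresponds to roughly 27 word-level errors for every 100 words in the reference transcript.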

Its creators claim that AV-HuBERT could have many noble uses in the future, such as creating conversational models for low-resource languages or developing speech recognition systems for people with speech impairments. However, many researchers, among them Os Keyes, an AI ethicist at the University of Washington, have already pointed out that these claims are unjustified.

It seems kind of ironic to manage to build software for speech recognition that depends on lip reading, and is likely to have inaccuracies when pointed at ... deaf people.

Similar conclusions were drawn by many scholars and researchers who specialize in Down syndrome, Parkinson's disease, and stroke cases: AV-HuBERT probably won't be effective for people with these conditions because they most likely won't have the same facial expressions as neurotypical people.

Even more unsettling than the mentioned shortcomings of the speech-recognition AI that Meta is developing are the hidden dangers of this technology. Imagine how easy it would be for threat actors to gather data from your most sensitive conversations by simply installing a video-only camera. The same goes for Meta VR products that have the power of unrestricted data gathering. Extortion, psychological manipulation, and worse are just some examples from a long list of horrifying potential scenarios.

From an ethical viewpoint

As the previously inaccessible biometric information is now becoming available to our employers, random companies, governments, and possibly even threat actors, the most worrying part, still, is that Meta is the one holding the monopoly in these data-gathering technological advancements.

So far, we can't say that Meta, or Facebook (we still aren't convinced that a name change can erase a problematic history), has used its findings and resources responsibly or has inspired much confidence in how it handles user data. Lawsuit after lawsuit has made the company reevaluate its innovation principles, change business strategies, and rebrand, but will Meta respect its users' privacy rights in the future? With the reach of these new technological advancements, we can only hope so.